This article covers the best KYA (Know Your Agent) tools for verifying AI bots and shows how tools from OpenAI, Microsoft, and IBM support the identity, transparency, and security of AI.

These tools help prevent abuse, increase trust, and support responsible AI use across industries and digital ecosystems.

Why Use KYA Tools to Verify AI Bots

Helps Avoid AI Fraud and Impersonation

KYA tools confirm the identity of AI agents, which reduces the threat of fake bots or harmful AI systems masquerading as genuine ones. Microsoft and OpenAI solutions are popular in the AI security space and help mitigate fraud.

Brings Transparency to the AI System

KYA solutions improve users’ understanding of and trust in an AI system by explaining how it works, in particular how it makes decisions and processes data.

Improves Security and Risk Management

AI risk and security frameworks, such as those from MITRE, help identify and manage risks and vulnerabilities in AI-integrated systems, especially mission-critical ones.

Fulfills Requirements of Various Regulations

With KYA tools, businesses can align with guidance from the USA’s National Institute of Standards and Technology (NIST) and meet their obligations under the European Union AI Act.

Builds Trust and Reliance

Verifying AI bots builds trust in, and reliance on, automated systems in the finance, healthcare, and customer care sectors.

Uncovers Bias and Other Ethical Problems

Tools from providers such as Anthropic and IBM help detect bias and keep AI behavior ethical.

Accountability of AI Systems

KYA solutions support accountability by providing audit trails and logging for AI systems.
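
An audit trail is more useful when it is tamper-evident. The following is a minimal sketch, assuming a hash-chained log in which each entry stores the hash of the previous one. The function names `append_entry` and `verify_chain` and the entry fields are illustrative assumptions, not part of any tool named in this article.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # assumed placeholder hash for the first entry


def _digest(body):
    """Hash an entry body deterministically (keys sorted before encoding)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log, agent_id, action):
    """Append an entry whose hash covers its content and the previous hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"agent_id": agent_id, "action": action, "prev_hash": prev_hash}
    log.append({**body, "hash": _digest(body)})
    return log


def verify_chain(log):
    """Return True only if every entry's hash and back-link are intact."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {key: entry[key] for key in ("agent_id", "action", "prev_hash")}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True
```

If an earlier entry is edited after the fact, `verify_chain` returns `False` because the stored hash no longer matches the entry's content.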

Safeguard AI Use in Enterprises

KYA tools give enterprises the control over their data and systems that they need to expand their AI use.

Key Points of the Best KYA Tools to Verify AI Bots

| Tool | Key Point |
|---|---|
| OpenAI Verify | Ensures AI identity verification, usage transparency, and safe deployment tracking for bots and agents. |
| Microsoft Copilot Trust Center | Provides security, compliance, and data protection insights for AI copilots and enterprise deployments. |
| Hugging Face Model Card Validator | Validates AI model documentation, including bias, training data, and ethical usage disclosures. |
| Google DeepMind Transparency Portal | Offers insights into AI model behavior, safety practices, and responsible AI development standards. |
| IBM Watson Audit Suite | Enables auditing of AI decisions, ensuring explainability, fairness, and regulatory compliance. |
| Anthropic AI Safety Checker | Focuses on AI alignment, safety testing, and reducing harmful or biased outputs. |
| European Union AI Act Compliance Scanner | Helps organizations meet EU AI Act requirements with automated compliance checks and risk categorization. |
| MITRE AI Risk Framework | Provides structured risk assessment and threat modeling for AI systems and autonomous agents. |
| National Institute of Standards and Technology AI Transparency Standards | Defines guidelines for AI explainability, accountability, and trustworthy system design. |
| CertiK AI Audit | Specializes in auditing AI-integrated blockchain systems for security vulnerabilities and trust assurance. |

1. OpenAI Verify

OpenAI Verify focuses on verifying AI agents so that they operate only under authenticated identities and in controlled environments. Companies can monitor how a model is used and set API-based usage controls and limits to prevent impersonation or harmful automation.

Its monitoring dashboards and identity layers improve trust and accountability between users and AI. Within an enterprise deployment strategy, OpenAI Verify helps maintain compliance with new and emerging AI governance guidelines.

It is well suited to businesses deploying conversational AI that need to stay compliant and audit-ready.
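
To illustrate the general idea of verified identities combined with usage limits, here is a minimal sketch built on an HMAC signature and a per-agent call counter. It does not use or represent OpenAI's API; the names `sign_agent_id`, `verify_agent`, and `UsageLimiter` are hypothetical, and a real deployment would also need key rotation and expiring credentials.

```python
import hashlib
import hmac


def sign_agent_id(agent_id: str, secret: bytes) -> str:
    """Issue a signature that binds an agent ID to a shared secret."""
    return hmac.new(secret, agent_id.encode(), hashlib.sha256).hexdigest()


def verify_agent(agent_id: str, signature: str, secret: bytes) -> bool:
    """Check the presented signature using a constant-time comparison."""
    expected = sign_agent_id(agent_id, secret)
    return hmac.compare_digest(expected, signature)


class UsageLimiter:
    """Cap the number of calls each agent may make (hypothetical policy)."""

    def __init__(self, max_calls: int):
        self.max_calls = max_calls
        self.calls = {}

    def allow(self, agent_id: str) -> bool:
        used = self.calls.get(agent_id, 0)
        if used >= self.max_calls:
            return False
        self.calls[agent_id] = used + 1
        return True
```

A caller would first check `verify_agent(...)` and only then ask the limiter whether the request is allowed, so unverified agents never consume quota.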

OpenAI Verify Features, Pros & Cons

Features

- AI identity verification and authentication

- API usage monitoring and tracking

- Content moderation and safety layers

- Audit logs of AI interactions

- Compliance-ready infrastructure

Pros

- Security and reliability

- Scales to enterprise AI systems

- API integration

- Monitoring capabilities

- Encourages responsible AI practices

Cons

- Limited visibility into the system’s internals

- Large-scale usage may be expensive

- Technical setup is needed

- Dependence on OpenAI’s ecosystem

- Complete customization may not be available for every industry

2. Microsoft Copilot Trust Center

Microsoft Copilot Trust Center establishes a framework for credentialing AI copilots within enterprise ecosystems. It covers data protection, compliance, and security, along with transparency about what the AI can access and which data it uses. This allows organizations to evaluate the AI’s decisions and exercise control in line with relevant regulations.

As part of an AI governance framework, Microsoft Copilot Trust Center helps organizations keep human-AI collaboration safe. It integrates with enterprise applications, works with Microsoft 365, and helps balance trust in the AI’s productivity features with control over data.

Microsoft Copilot Trust Center Features, Pros & Cons

Features

- Enterprise-grade security and compliance controls

- Access and permissions governance

- Built-in content safety filters

- Tools for evaluating AI performance and risk

- Data privacy protection within the Microsoft ecosystem (Microsoft Learn)

Pros

- Highly secure and enterprise-ready

- Strong Microsoft 365 integration

- Advanced compliance and governance tools

- Continuous feedback and monitoring systems

- Strong AI safety evaluations (Microsoft Learn)

Cons

- Best suited to users within the Microsoft ecosystem

- Requires ongoing setup and monitoring

- Subscription-based pricing

- Limited flexibility outside Microsoft tools

- Complex setup for beginners

3. Hugging Face Model Card Validator

Hugging Face Model Card Validator checks that AI models ship with clear, consistent documentation. It assesses what the documentation says about training datasets, biases, intended use, and ethical implications, helping ensure AI bots are built and used responsibly.

During AI lifecycle management, Hugging Face Model Card Validator helps developers and companies judge the trustworthiness of a model before integrating it. It promotes transparency, ethical AI use, and reproducibility, so users can make an informed decision about whether to adopt an AI agent.
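
A documentation check of this kind can be approximated by scanning a model card for expected sections. The sketch below is an assumption-laden illustration, not the validator's actual logic: the `REQUIRED_SECTIONS` list and the `missing_sections` function are hypothetical, and it only inspects markdown headings.

```python
import re
from typing import List

# Hypothetical list of sections a reviewer might require in a model card.
REQUIRED_SECTIONS = [
    "Intended Use",
    "Training Data",
    "Bias and Limitations",
    "Ethical Considerations",
]


def missing_sections(card_text: str) -> List[str]:
    """Return required section names that have no matching markdown heading."""
    headings = {
        match.group(1).strip().lower()
        for match in re.finditer(r"^#+[ \t]+(.+)$", card_text, re.MULTILINE)
    }
    return [name for name in REQUIRED_SECTIONS if name.lower() not in headings]


card = """# My Model

## Intended Use
Customer support triage.

## Training Data
Public support tickets.
"""
print(missing_sections(card))
```

A heading check only shows that a section exists. Whether the disclosure inside it is adequate still needs human review.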

Hugging Face Model Card Validator Features, Pros & Cons

Features

- Standardized AI model documentation

- Disclosure of bias and fairness

- Dataset and training transparency

- Open-source compatibility

- Tools for ethical AI reporting

Pros

- Supports safety and transparency

- Aimed at researchers and developers

- Free and open source

- Positive influence on AI ethics

- Integration and usability

Cons

- Weak compliance enforcement

- Relies on developer self-reporting

- Not a complete security tool

- No active monitoring

- Less focus on enterprises

4. Google DeepMind Transparency Portal

Google DeepMind Transparency Portal explains how complex AI systems work, how they are trained, and which tools are used to keep them safe. It demystifies complex models and provides documentation on how to test them safely.

This kind of documentation helps ensure AI bots are built and used ethically and responsibly, and it helps the public trust AI systems. The portal is particularly valuable to researchers and companies who want to confirm that the AI systems they use are safe and trustworthy.

Google DeepMind Transparency Portal Features, Pros & Cons

Features

- Insights on AI actions and safety

- Model documentation and evaluation

- Disclosures on risk and ethics

- Transparency tools backed by research

- Raw data on AI accountability

Pros

- Strong focus on AI ethics

- Better research and insights

- Transparency boosts trust

- Helpful to researchers and policymakers

- Responsible AI growth

Cons

- Insufficient tools for enterprise integrations

- No complete verification system

- More informational than actionable

- Data visibility is limited

- Technical knowledge required

5. IBM Watson Audit Suite

IBM Watson Audit Suite assesses AI systems for fairness, explainability, and compliance. Organizations can use it to audit AI decision-making in real time, find bias, and prepare compliance reports. Real-time bias detection and regulatory reporting are especially important in highly regulated industries like finance and healthcare.

Within enterprise risk management, compliance and audit AI tools like IBM Watson Audit Suite help ensure AI operates ethically and transparently. Its regulatory analytics and governance capabilities provide tools to audit AI agents and to maintain responsibility in sophisticated AI systems and their use.
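
Bias detection often starts with a simple group-level metric. The sketch below computes the demographic parity difference, the gap between the highest and lowest rate of positive decisions across groups. It is a generic illustration rather than IBM's method; the function name and the sample data are assumptions.

```python
def demographic_parity_difference(decisions, groups):
    """Return (gap, rates): the spread in positive-decision rates by group.

    decisions: iterable of 0/1 outcomes (1 = approved)
    groups:    iterable of group labels, aligned with decisions
    """
    by_group = {}
    for decision, group in zip(decisions, groups):
        by_group.setdefault(group, []).append(decision)
    rates = {group: sum(vals) / len(vals) for group, vals in by_group.items()}
    gap = max(rates.values()) - min(rates.values())
    return gap, rates


# Made-up sample: group "a" is approved 3 times out of 4, group "b" once out of 4.
decisions = [1, 1, 0, 1, 0, 0, 0, 1]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
gap, rates = demographic_parity_difference(decisions, groups)
print(rates, gap)
```

A gap near zero means the groups receive positive decisions at similar rates. What counts as an acceptable gap is a policy choice, and an audit would normally look at several metrics together.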

IBM Watson Audit Suite Features, Pros & Cons

Features

- AI auditing and explainability

- Fairness and bias evaluation

- Compliance with regulations

- Real-time monitoring

- Risk management

Pros

- Compliance and governance assistance

- Well suited to regulated industries

- Strong analytics and reporting

- Good enterprise option

- Increased AI accountability

Cons

- Expensive for small businesses

- Complicated setup

- Specialized personnel are needed

- Major infrastructural demands

- Low flexibility for startups

6. Anthropic AI Safety Checker

The Anthropic AI Safety Checker strives to ensure that AI systems exhibit behavior in accordance with human values and safety practices. This tool assesses potential harm, bias, and other negative impacts that an AI system can cause, which is important for ensuring that the system is deployed in a responsible manner.

Safety and control are the primary focus of this tool, which matters when verifying AI bots. Within an AI safety framework, the Anthropic AI Safety Checker helps reduce the risk of using autonomous agents. Tools designed around safety encourage developers to create AI systems that are reliable, ethical, and unlikely to be abused in real-world situations.

Anthropic AI Safety Checker Features, Pros & Cons

Features

- Testing alignment and safety of AI

- Detection of harmful outputs

- Bias and risk assessment

- Controlled response strategies

- Tools for validating ethical AI

Pros

- Robust AI risk mitigation

- Responsible AI development

- Advanced AI technologies

- Continuous improvement for safety

- Less harmful AI outputs

Cons

- Tools for enterprises are limited

- Only a partial toolset is offered

- Less attention to compliance reporting

- Effort may be needed for integration

- Competitors have wider scope

7. EU AI Act Compliance Scanner

The EU AI Act Compliance Scanner helps businesses determine whether their AI technologies meet the requirements of the EU AI Act. It classifies AI technologies by risk and suggests the compliance steps that follow. It is most relevant to businesses based in the EU and to businesses elsewhere that serve the EU market.

Within a regulatory compliance workflow, the EU AI Act Compliance Scanner helps ensure that AI bots are legally compliant. It helps organizations track regulatory requirements and build trust by showing that their AI is governed in line with responsible AI regulations.
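
The EU AI Act is commonly summarized as sorting AI systems into four risk tiers: unacceptable, high, limited, and minimal. The sketch below shows how a risk-categorization step might be organized. The use-case labels, the `EXAMPLE_TIERS` mapping, and the `classify` function are simplified assumptions for illustration; real classification depends on the text of the Act and on legal review.

```python
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Illustrative examples only; labels and assignments are assumptions.
EXAMPLE_TIERS = {
    "social_scoring": RiskTier.UNACCEPTABLE,
    "recruitment_screening": RiskTier.HIGH,
    "credit_scoring": RiskTier.HIGH,
    "customer_chatbot": RiskTier.LIMITED,
    "spam_filter": RiskTier.MINIMAL,
}


def classify(use_case: str) -> Optional[RiskTier]:
    """Look up a tier; None means the use case needs manual review."""
    return EXAMPLE_TIERS.get(use_case)


for case in ("customer_chatbot", "credit_scoring", "route_planner"):
    tier = classify(case)
    print(case, "->", tier.value if tier else "manual review")
```

Returning `None` for unknown use cases keeps the sketch from guessing a tier, which is the safer default for anything a lookup table has not seen.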

EU AI Act Compliance Scanner Features, Pros & Cons

Features

- Classification system for AI risks

- Evaluation of compliance to regulations

- Tools for documentation and reporting

- Recommendations for the mitigation of risks

- Compliance with the EU AI Act

Pros

- Compliance with laws is maintained

- Risks related to regulations are minimized

- There is a consistent framework

- The framework is applicable to international businesses

- Trust and transparency are increased

Cons

- The focus is primarily on regulations of the EU

- There are complicated legal regulations

- Compliance requires expertise

- The system is not a tool for technical security

- Needs changes whenever the regulation is updated

8. MITRE AI Risk Framework

MITRE AI Risk Framework guides users in identifying and addressing the risks of using AI technologies. It does so through threat modeling, risk assessment, and risk mitigation strategies designed for AI.

It is especially practical for organizations that run sensitive AI systems or treat AI as operationally critical. In cybersecurity and risk management, MITRE AI Risk Framework helps assess how far an AI system can be trusted.

It addresses operational and other risks by helping organizations design AI technologies and the processes around them for resilience.
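
A risk assessment usually ends in a ranked list of threats. The sketch below uses a generic likelihood-times-impact score to prioritize them. This scoring scheme is a common risk-matrix convention and is not claimed to be MITRE's methodology; the `Threat` class, the 1 to 5 scales, and the sample threats are assumptions.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Threat:
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)

    @property
    def score(self) -> int:
        """Simple risk score: likelihood multiplied by impact."""
        return self.likelihood * self.impact


def prioritize(threats: List[Threat]) -> List[Threat]:
    """Return threats sorted from highest to lowest risk score."""
    return sorted(threats, key=lambda threat: threat.score, reverse=True)


threats = [
    Threat("model theft", likelihood=2, impact=4),
    Threat("prompt injection", likelihood=4, impact=4),
    Threat("training data poisoning", likelihood=2, impact=5),
]
for threat in prioritize(threats):
    print(threat.score, threat.name)
```

The multiplication is deliberately crude. Its value is in forcing each threat to be rated on both axes so that mitigation effort goes to the top of the list first.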

MITRE AI Risk Framework Features, Pros & Cons

Features

- Tools for the modeling of AI threats

- Methodologies for the evaluation of risks

- Analysis of security and vulnerabilities

- Management of operational risks

- A comprehensive approach to the governance of AI

Pros

- There is a significant emphasis on cybersecurity

- The evaluation of risks is comprehensive

- The framework is suitable for critical systems

- The framework is open for customization

- The framework supports enterprise use

Cons

- There is a need for technical expertise

- There is no direct automation

- The system is complicated for beginners

- The time required for implementation is significant

9. NIST AI Transparency Standards

NIST AI Transparency Standards lay out the basic frameworks for building trusted and explainable AI systems. They guide accountability and improve the interpretability of AI systems, and they apply across industries.

For governance and compliance, NIST AI Transparency Standards serve as a reference for assessing AI systems. They help organizations check that AI bots meet ethical, legal, and operational requirements as the technology advances.

NIST AI Transparency Standards Features, Pros & Cons

Features

- Best practices for documentation

- Standards on accountability

- Governance frameworks

- Explainable AI frameworks

- Risk management

- Trustworthy AI frameworks

Pros

- Increased AI explainability

- Global adoption

- Supports compliance with regulations

- Flexible for different fields

- Facilitates governance for the future

Cons

- Not self-sufficient

- High implementation cost

- Lacks automation

- Organization commitment required

- Can be overwhelming for small teams

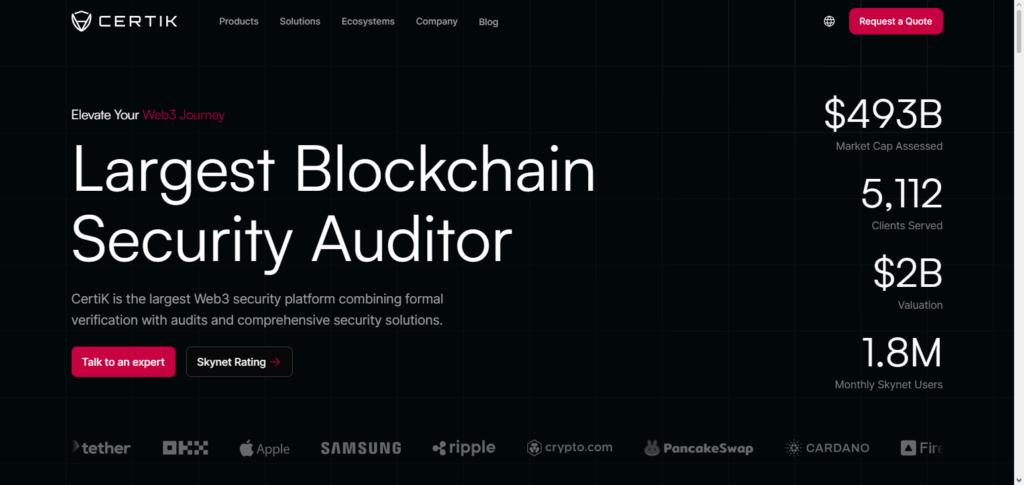

10. CertiK AI Audit

CertiK AI Audit focuses on auditing AI systems that are incorporated with blockchain and Web3 technologies. It specializes in vulnerability identification, code protection, and the auditing of AI smart contracts.

This tool is especially useful for decentralized applications that require a high level of trust. In the AI-integrated blockchain space, CertiK AI Audit provides protection and verification. By combining AI auditing with blockchain technology, it helps AI bots operate in a secure, transparent, and exploit-resistant decentralized environment.

CertiK AI Audit Features, Pros & Cons

Features

- Smart contracts and AI auditing

- Tools for detecting vulnerabilities

- AI-based security for blockchains

- Security monitoring and control

- Reports and scores on security

Pros

- In-depth auditing service

- Protects against vulnerabilities

- Extensive knowledge in blockchain

- High-quality security auditing

- Trusted in decentralized finance

- Comprehensive reports on audits

Cons

- High cost, with use cases limited to blockchains

- Not suited for classical AI systems

- Technical knowledge required

- Less about AI ethics and compliance

Conclusion

As AI agents automate more work, trust and verification become more important. Tools like OpenAI Verify, Microsoft Copilot Trust Center, and NIST frameworks provide a first step toward compliance and other protective measures. KYA tools protect enterprises and the communities downstream by supporting the ethical use of AI automation.

The ethical use of AI hinges on trust and verification. Adopting KYA tools helps organizations build trust with communities and demonstrate compliance to regulators by verifying their systems.

FAQ

What are KYA tools for AI bots?

KYA (Know Your Agent) tools are solutions designed to verify the identity, behavior, and trustworthiness of AI bots. Platforms like OpenAI Verify and Microsoft Copilot Trust Center help ensure AI systems operate securely and transparently.

Why is verifying AI bots important?

Verifying AI bots helps prevent fraud, misinformation, and misuse of automated systems. It ensures accountability, improves user trust, and aligns AI operations with ethical and regulatory standards set by organizations like the National Institute of Standards and Technology.

Which are the best KYA tools to verify AI bots?

Some of the best tools include OpenAI Verify, Microsoft Copilot Trust Center, Hugging Face Model Card Validator, and IBM Watson Audit Suite, along with compliance frameworks from MITRE and the European Union.

How do KYA tools ensure AI transparency?

KYA tools provide audit logs, model documentation, risk assessments, and explainability features. Solutions like Google DeepMind Transparency Portal and Anthropic AI Safety Checker help users understand how AI systems make decisions.