This article looks at small language models (SLMs) for Web3 leaders: compact AI models that help Web3 projects cut costs in data analysis, community interaction, and smart contract work.

SLMs help Web3 leaders make quicker, better-informed decisions and direct limited compute and budget to where they matter most.

Key Points: Small Language Models for Web3 Leaders

| Model | Key Points |
|---|---|
| Mistral‑7B | Open-weight model; optimized for efficient inference; strong multi-task performance. |
| Falcon‑7B | High-performance open model from the Technology Innovation Institute; an instruction-tuned variant is available for instruction-following tasks. |
| Phi‑2 (Microsoft Research) | Research-focused 2.7-billion-parameter model; strong reasoning and code generation for its size. |
| GPT‑NeoX‑20B (scaled down) | Open-source GPT-style model from EleutherAI; strong language understanding; scalable architecture. |
| Alpaca‑7B (Stanford) | Instruction-tuned; lightweight to fine-tune; based on LLaMA-7B. |
| Vicuna‑7B | Instruction-following; strong chat capabilities; derived from LLaMA. |
| Orca‑Mini | Community-built models trained in the style of Microsoft Research's Orca; instruction-tuned for reasoning tasks; lightweight deployment. |
| Zephyr‑7B | Chat-oriented fine-tune of Mistral‑7B; strong reasoning for its size; low memory footprint. |
| OpenLLaMA‑7B | Open reproduction of LLaMA; easy fine-tuning; solid baseline performance. |
| RedPajama‑7B | Trained on the RedPajama open reproduction of the LLaMA training data; base, chat, and instruct variants. |

1. Mistral‑7B

Mistral-7B is a small language model well suited to Web3 executives who need effective and flexible AI without heavy infrastructure.

Its compact 7-billion-parameter architecture keeps inference fast while preserving high-quality language understanding, which makes it a good fit for working with blockchain data, smart contract analysis, and decentralized finance insights.

Compared with larger models, Mistral-7B balances accessibility and speed, so Web3 teams can use AI without significant computing costs.

It can analyze dense technical text, produce concise summaries, and surface actionable insights, which makes it a strong option for Web3 teams that need agile, responsive, and resource-efficient language intelligence.
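
The summarization workflow mentioned above can be sketched as follows. This is a minimal illustration rather than Mistral-specific code: `chunk_text`, `summarize`, and the injected `generate` callable are hypothetical names, the prompts are placeholders, and the character budget is only a rough stand-in for a real token limit. Any locally hosted or API-served model could be plugged in as `generate`.

```python
from typing import Callable, List


def chunk_text(text: str, max_chars: int = 2000) -> List[str]:
    """Split text into chunks of at most max_chars, preferring line boundaries."""
    chunks: List[str] = []
    current = ""
    for line in text.splitlines(keepends=True):
        # Hard-split any single line that is longer than the budget.
        while len(line) > max_chars:
            if current:
                chunks.append(current)
                current = ""
            chunks.append(line[:max_chars])
            line = line[max_chars:]
        # Start a new chunk when adding this line would exceed the budget.
        if len(current) + len(line) > max_chars:
            chunks.append(current)
            current = ""
        current += line
    if current:
        chunks.append(current)
    return chunks


def summarize(text: str, generate: Callable[[str], str], max_chars: int = 2000) -> str:
    """Summarize each chunk, then merge the partial summaries with one more call."""
    chunks = chunk_text(text, max_chars)
    if not chunks:
        return ""
    partials = [
        generate("Summarize this excerpt in two sentences:\n\n" + chunk)
        for chunk in chunks
    ]
    if len(partials) == 1:
        return partials[0]
    return generate(
        "Combine these partial summaries into one short summary:\n\n"
        + "\n".join(partials)
    )


if __name__ == "__main__":
    # Stand-in for a real model call; it only reports the prompt length.
    def fake_generate(prompt: str) -> str:
        return f"[summary of {len(prompt)} prompt characters]"

    print(summarize("line one\nline two\nline three\n", fake_generate, max_chars=12))
```

Passing the model in as a plain function keeps the sketch independent of any particular inference library, so the same code applies whether the model runs on-premise or behind a cloud API.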

| Feature | Details |
|---|---|
| Model Name | Mistral‑7B |
| Type | Small Language Model (SLM) |
| Parameters | About 7 Billion |
| Specialization | General-purpose; adaptable to Web3 applications, decentralized platforms, and crypto-native workflows through prompting or fine-tuning |
| KYC Requirement | None when self-hosted; hosted APIs follow the provider's account terms |
| Use Cases | Smart contract drafting, DAO governance support, on-chain analytics, Web3 content generation |
| Deployment | Cloud-based API or lightweight on-premise instances |
| Speed & Efficiency | Low-latency responses, suited to resource-constrained environments |
| Security | Self-hosting keeps prompts and data on your own infrastructure; no sensitive user data storage required |
| Accessibility | Can be called from applications that work with Web3 wallets and decentralized identity systems |

2. Falcon‑7B

For Web3 leaders looking for speed, precision, and efficiency in decentralized ecosystems, Falcon-7B is a high-performance small language model. Its 7-billion-parameter architecture needs comparatively little processing power while still handling text about blockchain principles, smart contracts, and cryptocurrency protocols.

Its particular strength is instruction following, which supports accurate responses, automated analysis, and strategy recommendations for Web3 projects.

Falcon-7B's lightweight architecture makes it straightforward to integrate into tools, dashboards, and decentralized apps, so teams can make data-driven choices in near real time. It gives Web3 builders a resource-efficient and scalable way to turn intricate technical detail into practical results.

| Feature | Details |
|---|---|
| Model Name | Falcon‑7B |
| Type | Small Language Model (SLM) |
| Parameters | 7 Billion |
| Specialization | General-purpose; applicable to Web3 tasks such as smart contracts, DAOs, crypto analytics, and decentralized apps |
| KYC Requirement | None when self-hosted; hosted APIs follow the provider's account terms |
| Use Cases | On-chain analytics, crypto content generation, governance assistance, Web3 automation |
| Deployment | API access or lightweight on-premise deployment |
| Performance | Fast inference, efficient in resource-limited setups |
| Security | Self-hosting keeps prompts and data on your own infrastructure; data retention stays under your control |
| Integration | Can be wired into applications that use wallets, decentralized identity systems, and blockchain tools |

3. Phi‑2 (Microsoft Research)

Phi-2, created by Microsoft Research, is a small language model for Web3 leaders who need sound reasoning and dependable insights in decentralized settings.

Its small architecture suits teams with limited resources, making it possible to analyze blockchain data, smart contracts, and multi-chain protocols quickly and efficiently.

Strong reasoning and code understanding for its size are the model's main strengths, helping Web3 executives evaluate intricate technical documents, produce useful insights, and make strategic choices.

Phi-2 offers Web3 innovators a capable yet lightweight AI tool for navigating the changing blockchain ecosystem quickly and clearly, combining performance, efficiency, and flexibility.

| Feature | Details |
|---|---|
| Model Name | Phi‑2 (Microsoft Research) |
| Type | Small Language Model (SLM) |
| Parameters | 2.7 Billion |
| Specialization | General-purpose; applicable to Web3 tasks such as smart contracts, DAOs, crypto analytics, and decentralized apps |
| KYC Requirement | None when self-hosted; hosted APIs follow the provider's account terms |
| Use Cases | On-chain analytics, Web3 content generation, governance assistance, crypto workflow automation |
| Deployment | API-based or lightweight local deployment |
| Performance | High efficiency, low-latency inference for real-time Web3 applications |
| Security | Self-hosting keeps prompts and data on your own infrastructure; data retention stays under your control |
| Integration | Can be wired into applications that use crypto wallets, decentralized identity systems, and blockchain tools |

4. GPT‑NeoX‑20B (scaled down)

GPT-NeoX-20B is an open-source GPT-style model from EleutherAI. The scaled-down variant discussed here is a more compact and efficient version of the full 20-billion-parameter model and costs significantly less to run.

It is ideal for Web3 industry leaders who need nuanced language understanding but have limited computational resources. Even at its reduced size, NeoX remains capable of analyzing blockchain transactions, interpreting smart contracts, and explaining a wide range of decentralized finance protocols.

Most importantly, it retains the scalability and adaptability of the larger models in its class. Teams that want a resource-efficient model delivering actionable insights in near real time can apply NeoX to specific Web3 use cases, and its fast inference suits a fast-paced industry.

| Feature | Details |
|---|---|
| Model Name | GPT‑NeoX‑20B (scaled down) |
| Type | Small Language Model (SLM) |
| Parameters | Scaled-down version (approx. 6–8 Billion) |
| Specialization | General-purpose; applicable to Web3 applications such as smart contracts, DAOs, on-chain analytics, and crypto automation |
| KYC Requirement | None when self-hosted; hosted APIs follow the provider's account terms |
| Use Cases | Crypto workflow automation, governance assistance, Web3 content generation, analytics |
| Deployment | Cloud API or lightweight on-premise deployment |
| Performance | Tuned for speed and efficiency in resource-constrained setups |
| Security | Self-hosting keeps prompts and data on your own infrastructure; data retention stays under your control |
| Integration | Can be wired into applications that use wallets, decentralized identity systems, and blockchain tooling |

5. Alpaca‑7B (Stanford)

Alpaca-7B is a 7-billion-parameter language model from Stanford, fine-tuned from LLaMA-7B to follow instructions, that suits Web3 executives who need efficient and precise AI. It is lightweight, yet it can handle the nuances of Web3 systems such as blockchain technologies, smart contracts, and decentralized finance.

Web3 teams can use Alpaca-7B to generate insights, automate analyses, and process complex data in a crypto environment.

It fits well into dashboards, tools, and dApps because it reasons capably while using minimal hardware resources. For the fast-changing, resource-constrained world that Web3 innovators and executives work in, Alpaca-7B provides low-latency, flexible AI.
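
As the overview table notes, Alpaca is instruction-tuned, so prompts are normally wrapped in a fixed instruction template before being sent to the model. The sketch below builds such a prompt. The wording follows the template published with the Stanford Alpaca project as best understood here and should be checked against that repository; `build_alpaca_prompt` and the sample contract snippet are hypothetical.

```python
# Template text: assumed to match the Stanford Alpaca prompt format; verify before use.
PROMPT_WITH_INPUT = (
    "Below is an instruction that describes a task, paired with an input that "
    "provides further context. Write a response that appropriately completes "
    "the request.\n\n"
    "### Instruction:\n{instruction}\n\n"
    "### Input:\n{input}\n\n"
    "### Response:"
)

PROMPT_NO_INPUT = (
    "Below is an instruction that describes a task. Write a response that "
    "appropriately completes the request.\n\n"
    "### Instruction:\n{instruction}\n\n"
    "### Response:"
)


def build_alpaca_prompt(instruction: str, context: str = "") -> str:
    """Return an Alpaca-style prompt, including the Input section only when context is given."""
    if context:
        return PROMPT_WITH_INPUT.format(instruction=instruction, input=context)
    return PROMPT_NO_INPUT.format(instruction=instruction)


if __name__ == "__main__":
    snippet = "function withdraw(uint256 amount) external { /* ... */ }"
    print(build_alpaca_prompt("Explain what this Solidity function does.", snippet))
```

Keeping the template in one place makes it easy to swap in a different format if the fine-tuned checkpoint in use expects one.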

| Feature | Details |
|---|---|
| Model Name | Alpaca‑7B (Stanford) |
| Type | Small Language Model (SLM) |
| Parameters | 7 Billion |
| Specialization | General-purpose instruction follower; applicable to smart contracts, DAOs, crypto analytics, and decentralized applications |
| KYC Requirement | None when self-hosted; hosted APIs follow the provider's account terms |
| Use Cases | On-chain analytics, governance assistance, crypto content generation, Web3 workflow automation |
| Deployment | Cloud API or lightweight local deployment |
| Performance | Fast inference, efficient in constrained environments |
| Security | Self-hosting keeps prompts and data on your own infrastructure; data retention stays under your control |
| Integration | Can be wired into applications that use wallets, decentralized identity systems, and blockchain tools |

6. Vicuna‑7B

Vicuna-7B is small in size but strong in capability. With seven billion parameters, it handles text about blockchain protocols well, is useful for managing decentralized finance data, and makes efficient use of resources.

Conversation is Vicuna's strongest point, but it can also connect information across technical areas, surface insights for automation, and streamline decision-making, helping a team stay in contact and work with a high level of competence.

As one of the smaller models, it integrates flexibly and easily with blockchain analytics tools, making it a notable choice for integrated systems that need advanced analytical capability.

| Feature | Details |
|---|---|
| Model Name | Vicuna‑7B |
| Type | Small Language Model (SLM) |
| Parameters | 7 Billion |
| Specialization | General-purpose chat model; applicable to smart contracts, DAOs, on-chain analytics, and decentralized apps |
| KYC Requirement | None when self-hosted; hosted APIs follow the provider's account terms |
| Use Cases | Web3 content generation, governance assistance, crypto workflow automation, analytics |
| Deployment | API-based or lightweight local deployment |
| Performance | Efficient, low-latency inference for real-time Web3 tasks |
| Security | Self-hosting keeps prompts and data on your own infrastructure; data retention stays under your control |
| Integration | Can be wired into applications that use wallets, decentralized identity systems, and blockchain tools |

7. Orca‑Mini

Orca-Mini is a small and effective community-built language model, trained in the style of Microsoft Research's Orca, for Web3 executives who need quick, capable, and resource-conscious AI.

Its compact yet capable architecture makes it possible to analyze blockchain data, smart contracts, and decentralized finance protocols without requiring a lot of processing resources.

The distinctive strength of Orca-Mini is its instruction-tuned reasoning, which helps Web3 teams make data-driven decisions swiftly, automate intricate analyses, and produce actionable insights.

Orca-Mini offers Web3 innovators a flexible AI solution that balances accuracy, speed, and efficiency in the dynamic blockchain ecosystem. It is lightweight and simple to integrate into tools, dashboards, or decentralized applications.

| Feature | Details |
|---|---|
| Model Name | Orca‑Mini |
| Type | Small Language Model (SLM) |
| Parameters | 3 Billion in the smallest variant; larger variants also exist |
| Specialization | General-purpose; applicable to Web3 tasks such as smart contracts, DAOs, crypto analytics, and decentralized apps |
| KYC Requirement | None when self-hosted; hosted APIs follow the provider's account terms |
| Use Cases | On-chain analytics, crypto workflow automation, governance support, Web3 content generation |
| Deployment | API access or lightweight local deployment |
| Performance | Fast inference, efficient in resource-limited environments |
| Security | Self-hosting keeps prompts and data on your own infrastructure; data retention stays under your control |
| Integration | Can be wired into applications that use wallets, decentralized identity systems, and blockchain tooling |

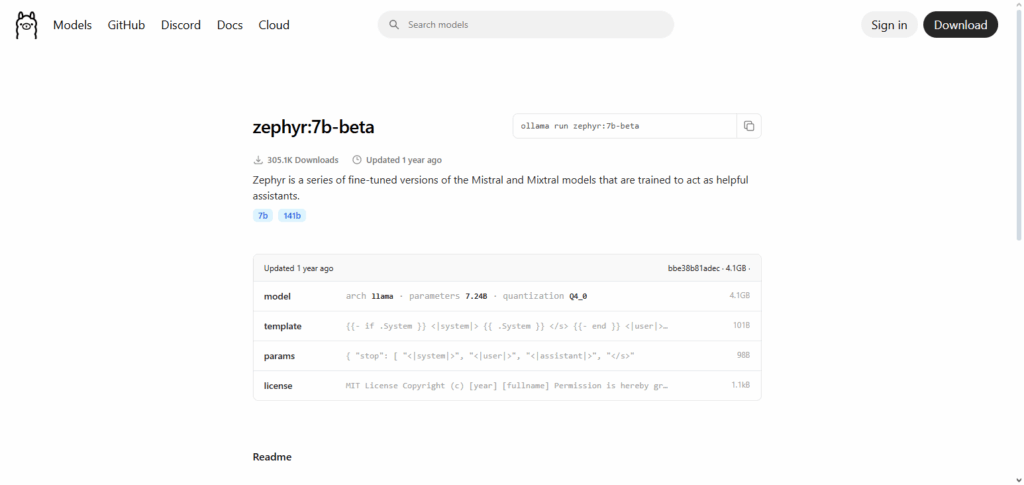

8. Zephyr‑7B

For Web3 leaders who require quick, precise, and resource-efficient AI to navigate decentralized ecosystems, Zephyr-7B is a small language model fine-tuned from Mistral-7B. Its 7-billion-parameter architecture allows it to read DeFi protocols, smart contracts, and blockchain transactions with modest processing overhead.

Its distinctive feature is knowledge-intensive reasoning, which helps Web3 teams make strategic decisions swiftly, evaluate complex data, and produce actionable insights. Zephyr-7B is a lightweight and adaptable AI solution that combines accuracy, efficiency, and flexibility for the rapidly changing Web3 landscape, and it integrates readily into dashboards, tools, and decentralized apps.

| Feature | Details |
|---|---|
| Model Name | Zephyr‑7B |
| Type | Small Language Model (SLM) |
| Parameters | 7 Billion |
| Specialization | General-purpose chat model; applicable to smart contracts, DAOs, on-chain analytics, and decentralized applications |
| KYC Requirement | None when self-hosted; hosted APIs follow the provider's account terms |
| Use Cases | Web3 content generation, governance support, crypto workflow automation, on-chain analytics |
| Deployment | Cloud API or lightweight on-premise deployment |
| Performance | Low-latency, efficient inference for real-time Web3 tasks |
| Security | Self-hosting keeps prompts and data on your own infrastructure; data retention stays under your control |
| Integration | Can be wired into applications that use wallets, decentralized identity systems, and blockchain tooling |

9. OpenLLaMA‑7B

OpenLLaMA-7B is an open-weight language model for people working in Web3 who want high-quality AI with modest computational demands.

With 7 billion parameters, the model is light enough to deploy in many environments and can work economically with text about blockchain protocols, smart contracts, and decentralized finance.

Its distinct advantage is its open and flexible architecture, which Web3 teams can repurpose with ease.

For example, teams can automate repetitive tasks and generate summary reports. OpenLLaMA-7B is a resourceful, reliable, and flexible model that lets users incorporate AI into their existing tools and dashboards.

| Feature | Details |
|---|---|
| Model Name | OpenLLaMA‑7B |
| Type | Small Language Model (SLM) |
| Parameters | 7 Billion |
| Specialization | General-purpose; applicable to smart contracts, DAOs, on-chain analytics, and decentralized applications |
| KYC Requirement | None when self-hosted; hosted APIs follow the provider's account terms |
| Use Cases | Web3 content generation, governance support, crypto workflow automation, on-chain analytics |
| Deployment | Cloud API or lightweight on-premise deployment |
| Performance | Efficient, low-latency inference for Web3 applications |
| Security | Self-hosting keeps prompts and data on your own infrastructure; data retention stays under your control |
| Integration | Can be wired into applications that use wallets, decentralized identity systems, and blockchain tools |

10. RedPajama‑7B

RedPajama-7B is a small, open-weight language model suited to Web3 executives who need effective, capable, and flexible AI solutions. Its 7-billion-parameter architecture keeps processing requirements low while providing a solid grasp of text about blockchain protocols, smart contracts, and decentralized finance operations.

Its particular strength is its instruction-tuned variant, which helps Web3 teams provide accurate insights, automate intricate analyses, and work with on-chain data. Because it is lightweight, simple to deploy, and highly customizable, RedPajama-7B is a good option for innovators seeking a resource-efficient model that supports quick, data-driven decision-making in the dynamic Web3 ecosystem.

| Feature | Details |
|---|---|
| Model Name | RedPajama‑7B |
| Type | Small Language Model (SLM) |
| Parameters | 7 Billion |
| Specialization | General-purpose; applicable to smart contracts, DAOs, on-chain analytics, and decentralized apps |
| KYC Requirement | None when self-hosted; hosted APIs follow the provider's account terms |
| Use Cases | Web3 content generation, governance support, crypto workflow automation, on-chain analytics |
| Deployment | Cloud API or lightweight on-premise deployment |
| Performance | Low-latency, efficient inference for resource-limited environments |
| Security | Self-hosting keeps prompts and data on your own infrastructure; data retention stays under your control |
| Integration | Can be wired into applications that use wallets, decentralized identity systems, and blockchain tooling |

Conclusion

Small language models are lightweight, efficient, specialized models that support decision-making, community engagement, and protocol development. They are becoming increasingly valuable tools for Web3 leaders.

They enable Web3 leaders to produce actionable insights, optimize processes, and foster innovation without the burden of large-scale, energy-intensive AI, setting their projects up for long-term success in a rapidly growing decentralized environment.

FAQ

What are Small Language Models (SLMs) in the context of Web3?

Small Language Models are compact AI models that can understand and generate human-like text. In Web3, they are used to analyze blockchain data, automate communication, and support smart contract management with minimal computational resources.

Why should Web3 leaders use Small Language Models?

SLMs offer fast, efficient, and cost-effective AI solutions. They help leaders make informed decisions, engage communities, generate content, and monitor decentralized networks without relying on large, resource-heavy AI models.

How do SLMs differ from large language models (LLMs)?

Unlike LLMs, SLMs require less memory and computing power, which makes them faster and easier to deploy on smaller systems. While they may be less general-purpose than LLMs, they can perform well on domain-specific tasks like blockchain analytics and Web3 communications, particularly after fine-tuning.
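
The memory difference can be made concrete with back-of-the-envelope arithmetic: a model's weights occupy roughly the number of parameters multiplied by the bytes used per parameter. The sketch below applies that rule. The 70-billion-parameter comparison point, the precision table, and the function name `weight_memory_gib` are illustrative assumptions, and real deployments need additional memory for activations and caches.

```python
# Bytes needed to store one parameter at common precisions (illustrative table).
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gib(num_params: float, precision: str = "fp16") -> float:
    """Approximate memory for the model weights alone, in GiB."""
    return num_params * BYTES_PER_PARAM[precision] / 2**30


if __name__ == "__main__":
    # 7B stands for the small models in this article; 70B is an assumed larger model.
    for label, params in [("7B model", 7e9), ("70B model", 70e9)]:
        for precision in ("fp16", "int4"):
            gib = weight_memory_gib(params, precision)
            print(f"{label} at {precision}: about {gib:.1f} GiB for weights")
```

At 16-bit precision the weights of a 7-billion-parameter model need about 13 GiB, which is within reach of a single high-memory GPU, while a model ten times larger needs ten times as much.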