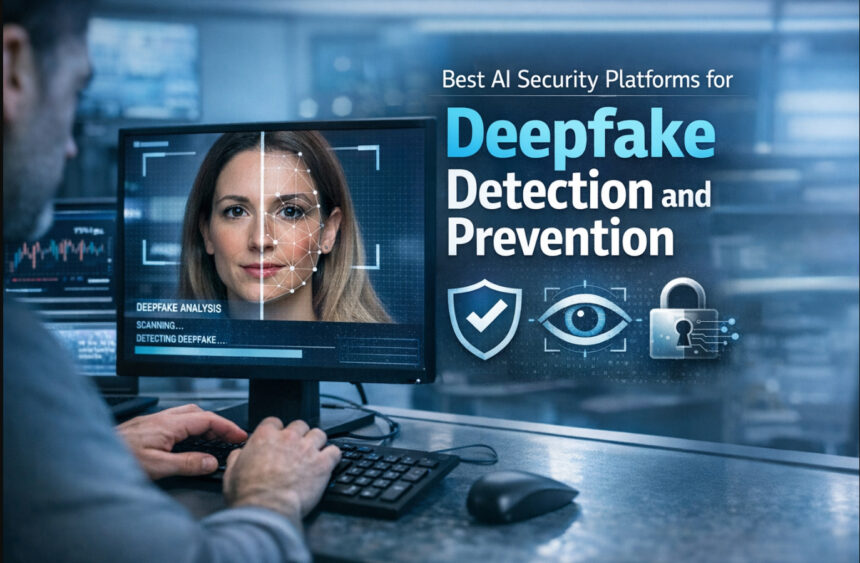

Deepfakes are an evolving digital threat that poses a significant risk to cybersecurity, identity protection, and online trust. The top AI security platforms for deepfake detection and prevention are transforming how organizations identify altered media, avoid deception, and protect communication platforms.

By combining advanced AI models, real-time analysis, and content verification methods, these platforms help businesses, governments, and individuals defend against increasingly frequent and sophisticated synthetic media attacks.

What Are Deepfakes and Why Are They Dangerous?

Deepfakes are videos, images, or audio that have been digitally altered or generated using AI techniques such as deep learning and Generative Adversarial Networks (GANs).

These technologies let creators realistically swap faces, clone voices, and even fabricate entirely fictional scenes that look real. Because deepfakes can impersonate real people, whether a celebrity, a politician, or an executive, it is increasingly difficult to tell authentic media from imitation.

The Dangers of Deepfakes

Identity Theft and Financial Fraud

Deepfakes are used to impersonate people in order to deceive employees or systems, enabling scams such as fake CEO calls and fraudulent video calls.

Misinformation and Fake News

Manipulated videos can mislead the public by showing people in authority saying or doing things they never said or did.

Reputation Damage

Deepfake content that depicts individuals or brands engaged in unethical, immoral, or illegal actions can cause serious reputational harm.

Cybersecurity Threats

Deepfakes make cyber threats more convincing than ever, as criminals use the technology in phishing, social engineering, and identity spoofing attacks.

Erosion of Trust

As deepfakes continue to improve, people may begin to doubt even genuine content, leading to a so-called “crisis of trust” in digital media.

Key Points

| AI Security Platform | Key Point (Deepfake Detection & Prevention Capability) |
|---|---|
| CloudSEK | Provides real-time deepfake detection backed by strong threat intelligence, helping organizations identify synthetic media attacks and prevent impersonation fraud at scale. |
| Sensity AI | Offers multimodal detection (video, audio, image, text) with a reported 95–98% accuracy; widely used for threat intelligence, identity protection, and compliance monitoring. |
| Reality Defender | Detects deepfakes across video, audio, images, and text in real time, focusing on enterprise fraud prevention and communication security. |
| FrameSentinel | Specializes in video KYC and identity fraud detection, using multiple AI models with fast API-based analysis for real-time prevention. |
| Intel FakeCatcher | Uses biological signals (blood-flow detection via PPG) to identify deepfake videos with high real-time accuracy and hardware integration. |
| OpenAI Deepfake Detector | Detects AI-generated images (especially DALL·E outputs) using embedded metadata and watermarking for authenticity verification. |
| Deeptrace AI | One of the earliest deepfake detection platforms, using machine learning to monitor and identify synthetic media threats globally. |
| Truepic | Uses blockchain-based verification to ensure the authenticity of images and videos, preventing tampering and misinformation. |
| Sentinel | Combines AI and computer vision to detect manipulated media and flag suspicious content for cybersecurity and media verification use cases. |
| Modulate (Velma AI) | Focuses on voice deepfake detection, analyzing tone, emotion, and speech patterns to identify synthetic or manipulated audio. |

1. CloudSEK

CloudSEK is a pioneer in AI-assisted cybersecurity, recognized for real-time deepfake detection and digital risk monitoring. It uses state-of-the-art machine learning models to examine video, audio, and image data for manipulation signatures, metadata anomalies, and behavioral irregularities.

Its threat intelligence tracks impersonation campaigns and synthetic identity fraud across the dark web. CloudSEK correlates deepfake signals with attack infrastructure and guides security teams in defending against fraud pre-emptively. Enterprises use it to secure communications, protect brand identity, and counter executive impersonation threats.

Best For:

- Brand reputation protection

- Dark web monitoring

- Executive impersonation detection

- Digital risk intelligence

Key Strengths:

- Real-time threat intelligence

- External attack surface monitoring

- AI-driven fraud detection

- Deepfake + phishing correlation

Use Cases:

- CEO fraud prevention

- Social media impersonation tracking

- Phishing campaign detection

- Brand abuse monitoring

2. Sensity AI

Sensity AI is an all-in-one deepfake detection platform that uses multi-layer forensic techniques to surface signs of manipulation in video, audio, and image formats. It detects face swaps, lip-sync manipulations, and synthetic voices by analyzing pixel-level inconsistencies, voice patterns, and audio file structure.

Sensity produces forensic evidence and confidence scores suitable for legal and compliance purposes. It supports cloud, on-premises, and offline deployments for scalability. Common use cases include KYC, law enforcement, and media authentication workflows.

Best For:

- Forensic deepfake analysis

- Compliance verification

- Media authentication

- Law enforcement use

Key Strengths:

- Multimodal detection (video/audio/image)

- High forensic accuracy

- Detailed reporting system

- Scalable deployment options

Use Cases:

- KYC verification

- Legal investigations

- Fake content detection

- Evidence validation

3. Reality Defender

Reality Defender is an enterprise-grade deepfake detection platform built to stop threats in real time across video, audio, images, and text. It employs multimodal AI models trained on real and synthetic datasets to identify impersonation attempts instantly.

It uses inference-based detection to flag manipulated content before it spreads and assigns a confidence score to each result.

It provides an API and SDK so developers can integrate detection into their own applications. It is widely used to protect financial institutions, government systems, and corporate communications against AI-driven fraud.

Best For:

- Enterprise security

- Real-time communication protection

- Fraud prevention

- API-based integration

Key Strengths:

- Real-time detection engine

- Multimodal AI models

- Easy API/SDK integration

- High-speed processing

Use Cases:

- Secure video conferencing

- Financial fraud detection

- Email and media verification

- Corporate communication safety

4. FrameSentinel

FrameSentinel is an AI platform dedicated to detecting deepfake-driven identity fraud in video KYC. It runs multiple detection modules in parallel that can identify face swaps, replay attacks, and synthetic identities within seconds.

The platform runs several AI models simultaneously and returns results through a real-time API. It is optimized for instant identity verification in fintech, banking, and customer onboarding systems.

With fast processing and a privacy-by-design architecture, FrameSentinel offers a secure, scalable identity verification solution that reduces fraud in digital onboarding workflows.
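The parallel, multi-model pattern described above can be sketched roughly as follows. This is not FrameSentinel's API: the three detector functions are hypothetical placeholders that return fixed scores, and the rule of flagging a frame when any single model exceeds a threshold is an assumption chosen for illustration.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical detectors: each takes a frame payload and returns a manipulation
# score in [0, 1]. A real system would run trained models here.
def face_swap_score(frame: bytes) -> float:
    return 0.12

def replay_attack_score(frame: bytes) -> float:
    return 0.81

def synthetic_identity_score(frame: bytes) -> float:
    return 0.30

DETECTORS = {
    "face_swap": face_swap_score,
    "replay_attack": replay_attack_score,
    "synthetic_identity": synthetic_identity_score,
}

def analyze_frame(frame: bytes, threshold: float = 0.7) -> dict:
    """Run every detector concurrently; flag the frame if any score exceeds threshold."""
    with ThreadPoolExecutor(max_workers=len(DETECTORS)) as pool:
        futures = {name: pool.submit(fn, frame) for name, fn in DETECTORS.items()}
        scores = {name: fut.result() for name, fut in futures.items()}
    flagged = [name for name, score in scores.items() if score > threshold]
    return {"scores": scores, "flagged_by": flagged, "suspicious": bool(flagged)}

result = analyze_frame(b"placeholder-frame")
print(result["flagged_by"])  # ['replay_attack']
```

Using an "any model fires" rule means one confident, specialized detector is enough to escalate a session; averaging the scores instead would be less sensitive to a single strong signal.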

Best For:

- Video KYC verification

- Fintech onboarding

- Identity fraud detection

- Secure authentication

Key Strengths:

- Real-time video analysis

- Multi-model detection system

- Fast API responses

- Privacy-focused design

Use Cases:

- Digital onboarding

- Identity verification checks

- Fraud detection in banking

- Secure customer authentication

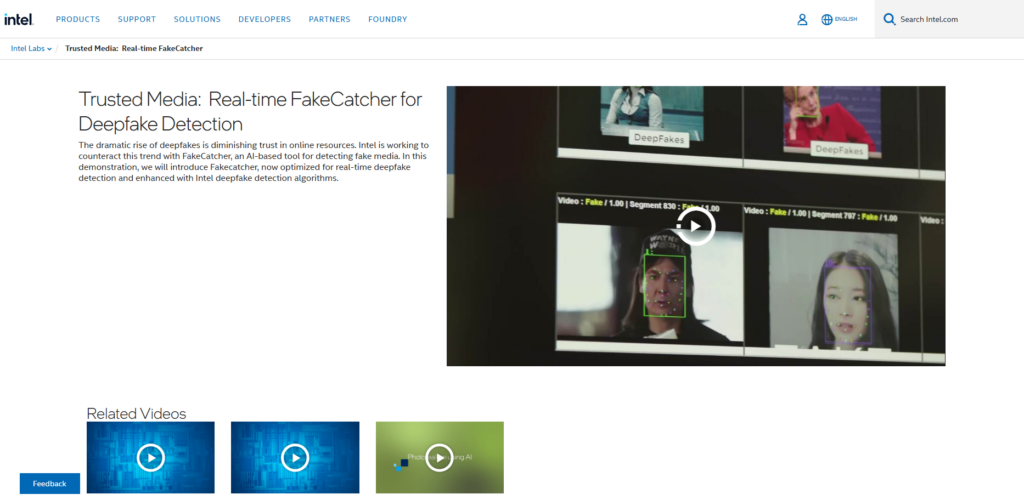

5. Intel FakeCatcher

Intel FakeCatcher detects fake videos by analyzing biological signals. Unlike more common tools, it uses photoplethysmography (PPG) to find the subtle blood-flow patterns in facial video that AI-generated content struggles to replicate.

It combines these physiological signals with visual data to improve accuracy. This approach supports high-confidence verification, especially in secure settings such as finance and identity authentication.

FakeCatcher runs in real time and integrates with hardware, which makes it practical for high-security verification systems.
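To make the PPG idea concrete, here is a toy sketch of remote PPG pulse estimation. It is not Intel's algorithm: it assumes you already have the average green-channel intensity of a face region for each video frame (a common starting point for remote PPG) and simply finds the strongest frequency in the roughly 0.7 to 4 Hz human heart-rate band. A real detector would go much further, for example checking whether pulse signals are consistent across different regions of the face.

```python
import math

def dominant_pulse_bpm(green_means: list[float], fps: float,
                       low_hz: float = 0.7, high_hz: float = 4.0,
                       step_hz: float = 0.01) -> float:
    """Return the strongest frequency (in beats per minute) within the heart-rate band.

    Evaluates the discrete-time Fourier transform of the mean-removed signal
    at evenly spaced candidate frequencies and picks the one with most power.
    """
    n = len(green_means)
    mean = sum(green_means) / n
    signal = [v - mean for v in green_means]  # remove DC offset (skin tone, lighting)
    best_freq, best_power = low_hz, -1.0
    steps = int(round((high_hz - low_hz) / step_hz))
    for k in range(steps + 1):
        f = low_hz + k * step_hz
        re = sum(s * math.cos(2 * math.pi * f * i / fps) for i, s in enumerate(signal))
        im = sum(s * math.sin(2 * math.pi * f * i / fps) for i, s in enumerate(signal))
        power = re * re + im * im
        if power > best_power:
            best_freq, best_power = f, power
    return best_freq * 60.0

# Synthetic 10-second clip at 30 fps with a faint 1.2 Hz (72 bpm) pulse.
fps = 30.0
samples = [120 + 0.5 * math.sin(2 * math.pi * 1.2 * i / fps) for i in range(300)]
print(round(dominant_pulse_bpm(samples, fps)))  # 72
```

The signal here is synthetic; real facial video adds motion and lighting noise, which is why production systems filter and cross-check the signal rather than trusting a single spectral peak.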

Best For:

- High-security environments

- Video authenticity verification

- Government systems

- Biometric analysis

Key Strengths:

- Blood flow (PPG) detection

- Real-time processing

- High accuracy verification

- Hardware-level optimization

Use Cases:

- Identity verification

- Secure access systems

- Video authenticity checks

- Fraud protection in sensitive industries

6. OpenAI Deepfake Detector

OpenAI's deepfake detector focuses on AI-generated images and other synthetic content that can be verified through watermarking techniques and metadata.

It uses signals embedded in generated media to establish authenticity and trace provenance. Alongside analyzing visual artifacts, it relies on content provenance and generation patterns.

This approach improves reliability and is most effective for images produced by models such as DALL·E, for content integrity checks on media platforms, and for digital rights management tools that help organizations separate real media from AI-generated output.
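The provenance side of this approach depends on metadata embedded in the file itself. As a rough sketch, and not OpenAI's detector, the code below walks the chunks of a PNG file and checks for a C2PA provenance manifest. It assumes the manifest is stored in a chunk of type `caBX`, which is our reading of how the C2PA specification embeds manifests in PNG; verify against the specification before relying on it.

```python
import struct
import zlib

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"

def png_chunk_types(data: bytes) -> list[str]:
    """List the chunk types of a PNG file, in order."""
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    pos, types = len(PNG_SIGNATURE), []
    while pos + 8 <= len(data):
        length, ctype = struct.unpack(">I4s", data[pos:pos + 8])
        types.append(ctype.decode("ascii"))
        pos += 8 + length + 4  # length/type header + chunk data + CRC
        if ctype == b"IEND":
            break
    return types

def has_provenance_manifest(data: bytes) -> bool:
    # Assumption: C2PA manifests in PNG files live in a chunk named "caBX".
    return "caBX" in png_chunk_types(data)

def make_chunk(ctype: bytes, payload: bytes) -> bytes:
    """Build one PNG chunk with a correct CRC (used here only to create test data)."""
    crc = zlib.crc32(ctype + payload) & 0xFFFFFFFF
    return struct.pack(">I", len(payload)) + ctype + payload + struct.pack(">I", crc)

# Minimal chunk sequences (no image data) just to exercise the walker.
ihdr = struct.pack(">IIBBBBB", 1, 1, 8, 0, 0, 0, 0)  # 1x1 grayscale header
plain = PNG_SIGNATURE + make_chunk(b"IHDR", ihdr) + make_chunk(b"IEND", b"")
signed = (PNG_SIGNATURE + make_chunk(b"IHDR", ihdr)
          + make_chunk(b"caBX", b"manifest-bytes") + make_chunk(b"IEND", b""))
print(has_provenance_manifest(plain), has_provenance_manifest(signed))  # False True
```

Finding a manifest is only the first step: its cryptographic signature still has to be validated. Conversely, metadata is easily stripped by re-encoding or screenshots, so a missing manifest does not prove an image is fake.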

Best For:

- AI-generated image detection

- Content authenticity verification

- Media platforms

- Digital rights protection

Key Strengths:

- Watermark-based detection

- Metadata analysis

- AI content tracing

- Scalable detection models

Use Cases:

- Fake image detection

- Content moderation

- Media verification

- AI-generated content identification

7. Deeptrace AI

Deeptrace AI is one of the earliest platforms for detecting and tracking deepfake content on the web. It scans for synthetic media with machine learning models and follows each item's reach and origin.

Deeptrace applies pattern recognition and large-scale monitoring to detect coordinated misinformation campaigns. It is used for media intelligence, brand protection, and threat analysis.

Its real strength lies in monitoring and measuring deepfake ecosystems on a global scale, enabling organizations to understand, prepare for, and respond to emerging synthetic media threats.
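Tracking the reach of a piece of synthetic media usually means recognizing re-uploads that have been resized, brightened, or recompressed. One common technique for this, not necessarily the one Deeptrace used, is perceptual hashing. The sketch below computes an average hash, assuming frames have already been downscaled to 8x8 grayscale thumbnails; the 5-bit match threshold is an illustrative assumption.

```python
def average_hash(pixels: list[list[int]]) -> int:
    """Compute a 64-bit average hash from an 8x8 grayscale thumbnail."""
    flat = [p for row in pixels for p in row]
    if len(flat) != 64:
        raise ValueError("expected an 8x8 thumbnail")
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)  # 1 where pixel is brighter than average
    return bits

def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")

def is_likely_repost(h1: int, h2: int, max_distance: int = 5) -> bool:
    return hamming(h1, h2) <= max_distance

original = [[r * 8 + c for c in range(8)] for r in range(8)]
brighter = [[p + 1 for p in row] for row in original]            # same clip, re-encoded brighter
different = [[c * 8 + r for c in range(8)] for r in range(8)]    # unrelated image
print(hamming(average_hash(original), average_hash(brighter)))   # 0
print(hamming(average_hash(original), average_hash(different)))  # 32
```

Because the hash depends only on which pixels are brighter than average, uniform brightness shifts and mild compression leave it largely unchanged, which is what makes it useful for following copies across platforms.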

Best For:

- Global deepfake monitoring

- Threat intelligence

- Media tracking

- Misinformation analysis

Key Strengths:

- Large-scale scanning

- Pattern recognition

- Threat tracking capability

- Continuous monitoring

Use Cases:

- Fake news detection

- Brand monitoring

- Online threat analysis

- Deepfake trend tracking

8. Truepic

Truepic offers a content authenticity platform that combats deepfakes with tamper-proof images and videos. It verifies media integrity using cryptographic signatures and blockchain-based technology, from the moment of capture all the way to the viewer.

Truepic checks metadata, timestamps, and device authenticity to confirm that content has not been altered.

This proactive approach differs from conventional detection by establishing trust at the source. It is especially valuable in insurance, finance, and journalism, where verified visual proof carries extra weight in analysis and compliance.
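The core idea behind capture-time verification is that any change to the media bytes or their metadata after signing invalidates the signature. The sketch below illustrates this with an HMAC from Python's standard library. That is a deliberate simplification and not Truepic's implementation: production systems, such as those built on the C2PA standard, use public-key signatures and certificates so that verifiers never need the signing secret. The key and metadata fields here are hypothetical.

```python
import hashlib
import hmac
import json

DEVICE_KEY = b"hypothetical-device-secret"  # stand-in for a hardware-protected signing key

def sign_capture(media: bytes, metadata: dict) -> str:
    """Bind the media bytes and capture metadata together under one tag."""
    payload = hashlib.sha256(media).digest() + json.dumps(metadata, sort_keys=True).encode()
    return hmac.new(DEVICE_KEY, payload, hashlib.sha256).hexdigest()

def verify_capture(media: bytes, metadata: dict, tag: str) -> bool:
    expected = sign_capture(media, metadata)
    return hmac.compare_digest(expected, tag)  # constant-time comparison

photo = b"\x89raw-image-bytes"
meta = {"device": "cam-01", "timestamp": "2024-05-01T10:00:00Z"}
tag = sign_capture(photo, meta)
print(verify_capture(photo, meta, tag))                  # True
print(verify_capture(photo + b"edit", meta, tag))        # False: pixels changed
print(verify_capture(photo, {**meta, "timestamp": "2024-05-02T10:00:00Z"}, tag))  # False: metadata changed
```

Serializing the metadata with `sort_keys=True` keeps the signed payload stable regardless of key order, so only real changes cause verification to fail.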

Best For:

- Content verification at source

- Legal evidence validation

- Insurance claims

- Trusted media capture

Key Strengths:

- Cryptographic verification

- Blockchain-based trust

- Tamper-proof media

- Secure capture technology

Use Cases:

- Insurance documentation

- Journalism verification

- Legal evidence submission

- Field data validation

9. Sentinel AI

Sentinel AI helps detect identity spoofing and deepfake attacks that use synthetic personas. It leverages on-device computer vision and real-time AI models to analyze manipulated media and assess risk levels in identity verification workflows.

Sentinel strengthens fraud protection by combining detection results with behavioral and device intelligence. It is best suited to onboarding, login authentication, and account recovery.

The platform identifies deepfake-driven impersonation attempts in real time and gives organizations the tools and insights they need to prevent identity fraud and unauthorized access.
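Combining media detection with behavioral and device intelligence is often done with a weighted risk score. The signal names, weights, and decision thresholds below are hypothetical, chosen only to illustrate the pattern; they are not Sentinel's model.

```python
from dataclasses import dataclass

@dataclass
class Signals:
    deepfake_score: float    # 0..1 from a media deepfake detector
    behavior_anomaly: float  # 0..1, e.g. unusual typing or navigation patterns
    device_risk: float       # 0..1, e.g. emulator, new device, or risky network

# Hypothetical weights; in practice these would be tuned on labeled fraud data.
WEIGHTS = {"deepfake_score": 0.5, "behavior_anomaly": 0.3, "device_risk": 0.2}

def risk_score(s: Signals) -> float:
    return (WEIGHTS["deepfake_score"] * s.deepfake_score
            + WEIGHTS["behavior_anomaly"] * s.behavior_anomaly
            + WEIGHTS["device_risk"] * s.device_risk)

def decide(s: Signals) -> str:
    score = risk_score(s)
    if score >= 0.7:
        return "block"
    if score >= 0.4:
        return "step_up"  # ask for an additional verification factor
    return "allow"

print(decide(Signals(0.9, 0.8, 0.6)))  # block
print(decide(Signals(0.6, 0.3, 0.2)))  # step_up
print(decide(Signals(0.1, 0.1, 0.0)))  # allow
```

The middle "step-up" tier lets a system challenge moderately suspicious sessions instead of blocking legitimate users outright.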

Best For:

- Identity fraud detection

- Account security

- Authentication systems

- User verification

Key Strengths:

- Computer vision analysis

- Behavioral intelligence

- Real-time detection

- Risk scoring system

Use Cases:

- Login protection

- Account recovery security

- Fraud detection systems

- Identity verification workflows

10. Modulate (Velma AI)

Modulate’s Velma AI is a specialized platform for identifying voice deepfakes and synthetic speech in real-time communications. It detects inconsistencies in tone, emotion, speech patterns, and acoustic signals to distinguish AI-generated voices.

Velma uses behavioral voice modeling to differentiate between real human speech, manipulated audio, and cloned voices.

It is popular in gaming, customer service, and online platforms for creating safe spaces for communication, and it helps mitigate voice-based fraud, harassment, and impersonation attacks across digital ecosystems.

Best For:

- Voice deepfake detection

- Online communication safety

- Gaming platforms

- Voice moderation

Key Strengths:

- Real-time voice analysis

- Emotion detection

- Speech pattern recognition

- AI voice moderation

Use Cases:

- Voice fraud detection

- Gaming chat moderation

- Call center security

- Audio authenticity verification

Key Features to Look for in AI Deepfake Detection Platforms

Real-Time Detection Capability

The platform should analyze and identify deepfakes in real time as content is uploaded or streamed live, stopping fraud, misinformation, and security threats before they reach the user or system.
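In a live stream, per-frame detector scores are noisy, so a real-time pipeline typically smooths them before raising an alert. The sketch below uses an exponential moving average and requires the smoothed score to stay above a threshold for several consecutive frames. The smoothing factor, threshold, and frame count are illustrative assumptions, not values from any platform listed above.

```python
from typing import Iterable, Optional

def first_alert_frame(scores: Iterable[float], alpha: float = 0.3,
                      threshold: float = 0.6, min_frames: int = 3) -> Optional[int]:
    """Return the index of the frame that triggers an alert, or None if none does.

    scores: per-frame deepfake probabilities arriving in stream order.
    alpha: weight of the newest score in the exponential moving average.
    """
    ema = None
    streak = 0
    for i, score in enumerate(scores):
        ema = score if ema is None else alpha * score + (1 - alpha) * ema
        streak = streak + 1 if ema > threshold else 0  # consecutive frames above threshold
        if streak >= min_frames:
            return i
    return None

print(first_alert_frame([0.1, 0.95, 0.1, 0.1, 0.1]))            # None: one-frame spike
print(first_alert_frame([0.1, 0.9, 0.9, 0.9, 0.9, 0.9, 0.9]))   # 5: sustained manipulation
```

Smoothing trades a few frames of latency for far fewer false alarms from single-frame glitches; a lower `alpha` or higher `min_frames` shifts that balance further toward caution.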

Multimodal Analysis Support

The best platforms analyze video, audio, images, and text so they can detect deepfakes across multiple media types, not just one.

High Accuracy with a Low False Positive Rate

An effective system should deliver highly accurate results with few false positives, minimizing how often genuine content is flagged incorrectly so that security operators can keep trusting the system.
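Accuracy alone can hide a troublesome false positive rate when most content is genuine. The worked example below uses invented counts purely for illustration; they are not measurements of any platform.

```python
def detection_metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Standard confusion-matrix metrics, where 'positive' means 'flagged as deepfake'."""
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total,
        "false_positive_rate": fp / (fp + tn),  # share of genuine items wrongly flagged
        "recall": tp / (tp + fn),               # share of deepfakes actually caught
        "precision": tp / (tp + fp),            # share of flags that really are deepfakes
    }

# Hypothetical test set: 100 deepfakes and 9,900 genuine files.
m = detection_metrics(tp=90, fp=198, tn=9702, fn=10)
print(round(m["accuracy"], 4))             # 0.9792
print(round(m["false_positive_rate"], 4))  # 0.02
print(round(m["precision"], 4))            # 0.3125
```

Here a detector that is about 98% accurate, with only a 2% false positive rate, is still wrong about roughly two out of three items it flags, because genuine files vastly outnumber deepfakes. That is why the false positive rate deserves as much scrutiny as headline accuracy.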

API and System Integration

The platform should offer flexible APIs and SDKs that integrate into existing security systems, applications, and workflows with minimal disruption to business processes and without a complex implementation effort.

Scalability and Performance

The solution must process large volumes of data efficiently, with enterprise-level scalability, consistent performance in high-traffic environments, low-latency real-time processing, and support for many concurrent detection requests.

Advanced Forensic Analysis

Robust platforms offer pixel-level forensics, voice pattern analysis, and metadata evaluation, enabling detailed investigation and vetting of suspected deepfakes.

Compliance and Reporting Tools

The platform should provide comprehensive reporting, audit trails, and compliance features that meet legal and regulatory requirements and support internal security audits, with automated reports that bring transparency and accountability to the detection process.
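One common way to make an audit trail tamper-evident is to chain entries by hash, so that editing any past record breaks verification. This is a generic sketch, not a feature documented for any platform named above; the record fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def append_entry(log: list[dict], event: dict) -> None:
    """Append an event whose hash covers both the event and the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    log.append({"prev": prev_hash, "event": event,
                "hash": hashlib.sha256(body.encode()).hexdigest()})

def verify_log(log: list[dict]) -> bool:
    """Recompute every hash in order; any edited or reordered entry fails the check."""
    prev_hash = GENESIS
    for entry in log:
        body = json.dumps({"prev": prev_hash, "event": entry["event"]}, sort_keys=True)
        if entry["prev"] != prev_hash or entry["hash"] != hashlib.sha256(body.encode()).hexdigest():
            return False
        prev_hash = entry["hash"]
    return True

log: list[dict] = []
append_entry(log, {"file": "clip_001.mp4", "verdict": "deepfake", "score": 0.93})
append_entry(log, {"file": "clip_002.mp4", "verdict": "authentic", "score": 0.08})
print(verify_log(log))                     # True
log[0]["event"]["verdict"] = "authentic"   # tamper with an earlier record
print(verify_log(log))                     # False
```

Hash chaining detects edits but not a wholesale rewrite by someone who recomputes every hash; periodically storing the latest hash in a separate, write-once location closes that gap.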

Benefits of Using AI Deepfake Detection Platforms

Prevents Financial Fraud

AI deepfake detection platforms help stop impersonation attacks, fake video calls, and voice scams, preventing organizations and individuals from suffering financial losses through fraudulent transactions or social engineering schemes.

Protects Brand Reputation

These platforms identify altered media that targets brands or senior leaders, helping stop the spread of malicious misinformation and the reputational damage caused by fake videos, images, or statements shared on social platforms and other digital channels.

Enhances Cybersecurity Defense

Deepfake detection adds another layer to the cybersecurity framework, providing extra protection against phishing, identity spoofing, and advanced social engineering attacks on sensitive systems.

Ensures Content Authenticity

Organizations can check whether media content is original or manipulated, which increases trust in digital communications, journalism, and online platforms, where authenticity is crucial to credibility and decision-making.

Supports Legal and Compliance Needs

These tools give organizations forensic evidence, audit trails, and digital reports that help them meet regulatory compliance requirements and investigate crimes ranging from misinformation and identity theft to fraud.

Secures Digital Identity Verification

Deepfake detection strengthens identity verification by blocking fake identities during onboarding, login, and KYC, ensuring that only real users can access sensitive services and platforms.

Builds Trust in Digital Ecosystems

Such platforms assist in restoring trust by limiting the dissemination of manipulated content, thereby enabling secure communication, dependable information sharing, and increased confidence in digital technologies across sectors.

Challenges and Limitations

Evolving Deepfake Technology

Deepfake generation tools are advancing rapidly, and detection systems struggle to keep pace as new techniques evade existing algorithms, degrading the performance of current detectors.

Accuracy and False Positives

Detectors may misidentify real content as fake (false positives) or miss new generations of deepfakes (false negatives), creating distrust, increasing operating overhead, and exposing critical decisions to unexpected risks.

High Implementation Cost

Advanced deepfake detection technologies can demand heavy investment in infrastructure, licensing, and integration, which can put them out of reach for small businesses and organizations with limited cybersecurity expertise.

Limited Real-Time Capabilities

Even real-time platforms may experience delays when processing large amounts of data, which limits their effectiveness against live fraud, manipulated live streams, or immediate threats on communication platforms.

Data Privacy Concerns

Analyzing sensitive audio, video, and personal data raises privacy concerns, especially because many platforms store or process information externally, which can create compliance risks under data protection laws.

Lack of Standardization

There are still no universal standards for deepfake detection, so results and performance vary between platforms in ways that organizations find difficult to compare or trust.

Dependency on Training Data

Deepfake detection systems depend on their training data, and gaps or bias in that data can degrade accuracy, especially when a new generation technique appears in real-world conditions for the first time.

Conclusion

As the threat of synthetic media grows across sectors, AI deepfake detection platforms have become vital. Advances such as multimodal analysis, real-time detection, and forensic intelligence are evident in tools like CloudSEK, Sensity AI, and Reality Defender.

Meanwhile, Intel FakeCatcher and Truepic show a shift toward newer techniques, such as biological signal analysis and authenticating content at its origin.

Still, none of these platforms is foolproof. Fast-evolving deepfake techniques, false positives, and the absence of thorough standards mean organizations need a multi-layered security model.

Effective protection comes from combining detection tools with identity verification, user awareness, and cybersecurity frameworks.

Ultimately, investing in the right AI deepfake detection platform not only prevents fraud and misinformation but also reinforces digital trust, supporting safe communication and reliable digital ecosystems over the long term.

FAQ

What is the best AI platform for deepfake detection?

There is no single “best” platform, as it depends on use case. Sensity AI excels in forensic analysis, while Reality Defender is strong in real-time detection, and CloudSEK focuses on threat intelligence and brand protection.

How accurate are AI deepfake detection platforms?

Most advanced platforms achieve high accuracy using multimodal AI, but performance varies depending on content quality and complexity. Even top tools like Intel FakeCatcher can face challenges with highly sophisticated deepfakes.

Can AI completely prevent deepfake attacks?

No, AI cannot completely eliminate deepfakes. Platforms like Truepic help prevent manipulation at the source, but the strongest protection comes from combining detection, verification, and cybersecurity practices.

Which industries need deepfake detection the most?

Industries such as finance, cybersecurity, media, government, and e-commerce benefit significantly. Tools like FrameSentinel are widely used in fintech, while media companies rely on detection for content authenticity.

What types of deepfakes can these platforms detect?

Most platforms detect video, audio, image, and sometimes text-based deepfakes. For example, Modulate (Velma AI) specializes in voice deepfake detection, while others provide full multimodal coverage.